LinkedIn’s algorithm underwent significant changes in May 2022. LinkedIn introduced constraints into its algorithm and now takes more explicit variables into account. This has had a substantial impact on the visibility of posts. Although the basic functioning of the LinkedIn algorithm has not changed, the announcements deserve a closer look, and we analyze them for you.

The goals of LinkedIn’s algorithm changes

- The rules for highlighting posts have not changed. Engagement is still the number one criterion for the algorithm. However, LinkedIn has introduced mechanisms to penalize certain content.

- Penalization mechanisms are based primarily on so-called explicit variables (activated by the user)

- A new mechanism, based on an implicit variable, aims to penalize authors who ask for likes or comments in their posts. This mechanism is likely based on simple “business rules” (see here for a detailed explanation)

- However, the changes are not without consequences since they risk creating a filter bubble

- All the changes aim to favor quality content and content that generates interactions.

The changes in brief

LinkedIn announced several changes on its blog:

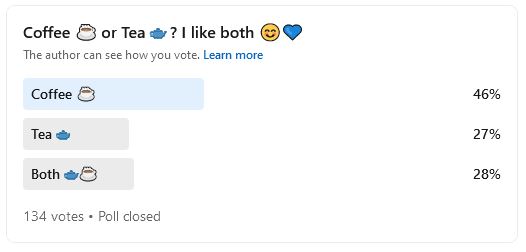

Fewer polls in the LinkedIn feed

Users had complained about the omnipresence of polls in their news feed. As is often the case when a new feature is launched, LinkedIn promoted its use by artificially increasing its visibility. As a result, polls could get thousands of views without any effort, and they became ubiquitous. Their creators used them indiscriminately, without understanding that the actual value of LinkedIn lies in the interaction. Meaningless polls invaded users’ news feeds to the point where there was a groundswell of protest.

Less political content

The polarization of ideas fuels the fragmentation of society. This is the phenomenon of “archipelization” described by sociologist J. Fourquet. Like all other social networks, LinkedIn has become a platform for political ideas. There was a particular urgency to “protect” users from this content, so as not to fuel heated exchanges. This is now possible for users based in the United States.

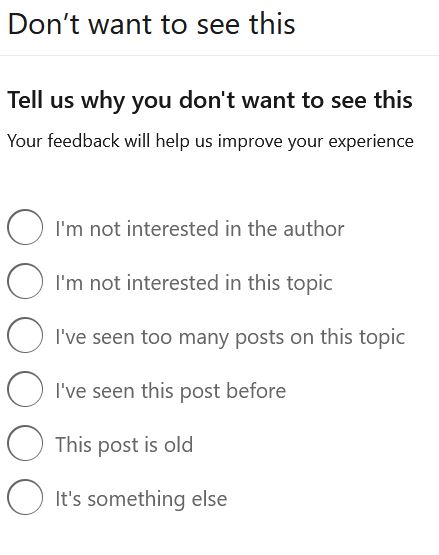

Report unwanted content and authors

A significant change is the ability for users to report content that bothers them even though it does not break the platform’s rules. What’s new is that LinkedIn’s algorithm now takes this explicit feedback into account. You can see below that the options for giving feedback to the algorithm are quite varied. Several feedback loops had to be implemented to feed the LinkedIn algorithm. Behind its fairly simple appearance, however, this type of feedback remains complicated to use.

For example, using the “I’ve seen too many posts on this topic” feedback implies that each post is analyzed with an NLP algorithm to “tag” it, i.e., to determine the related topic(s). This type of algorithm works quite well with lengthy content, but when you consider that 50% of posts contain fewer than 39 words, you might reasonably question the accuracy of the process.
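To illustrate why short posts are hard to tag, here is a minimal sketch that uses plain keyword counting as a stand-in for a real NLP topic model. The topic lexicon, the `tag_topics` function, and the `min_hits` threshold are all hypothetical and are not taken from LinkedIn; the point is only that a short post offers too few signals to tag with confidence.

```python
import re
from collections import Counter

# Hypothetical topic lexicon, for illustration only.
# A production system would use a trained NLP model, not keyword lists.
TOPIC_KEYWORDS = {
    "hiring": {"hiring", "recruiting", "job", "candidate", "interview"},
    "marketing": {"marketing", "brand", "campaign", "audience"},
    "politics": {"election", "policy", "government", "vote"},
}


def tag_topics(post_text, min_hits=2):
    """Return the topics whose keywords appear at least `min_hits` times."""
    words = re.findall(r"[a-z']+", post_text.lower())
    hits = Counter()
    for word in words:
        for topic, keywords in TOPIC_KEYWORDS.items():
            if word in keywords:
                hits[topic] += 1
    # Keep only topics supported by enough evidence.
    return sorted(topic for topic, count in hits.items() if count >= min_hits)


short_post = "Great news, we are hiring!"
long_post = (
    "We are hiring again. Every candidate gets an interview within a week, "
    "and the job is remote."
)

print(tag_topics(short_post))  # a single keyword is below the threshold
print(tag_topics(long_post))   # several keywords support the same topic
```

With a threshold of two keyword hits, the short post receives no topic at all, while the longer post is tagged as expected. Lowering the threshold would tag the short post, but at the cost of more wrong tags on other content.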

Harmless as it looks, the category “this post is old” can be an effective way to detect plagiarism. Indeed, it is not uncommon for some users to copy viral posts. This is what happened with the famous post “do you see the panda?”

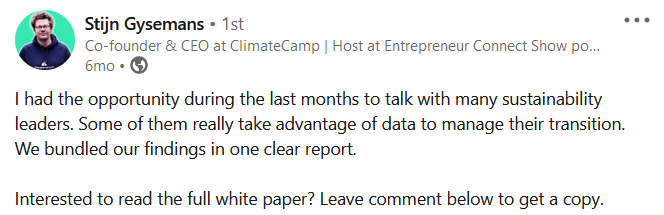

How will LinkedIn detect “calls” for likes or comments?

“Leave a comment if you want to receive my free guide.” This technique is a classic way of artificially boosting the popularity of a post, and LinkedIn has decided to tackle it. In the announcement on its blog, LinkedIn explains:

“We’ve seen several posts that expressly ask or encourage the community to engage with content via likes or reactions – posted to boost reach on the platform. We’ve heard this type of content can be misleading and frustrating for some. We won’t be promoting this type of content, and we encourage everyone in the community to focus on delivering reliable, credible, and authentic content.”

The immediate question is: How will LinkedIn spot this type of content? Without having a crystal ball, it’s reasonable to assume that LinkedIn will once again rely on a business rule.

Posts that promote this type of behavior almost always use the same wording. This makes it easy for LinkedIn to spot the typical phrases and penalize these posts. This has a practical consequence for all users: you must be careful not to use these phrases, at the risk of seeing your post, even a legitimate one, become invisible.
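A business rule of this kind could be as simple as a list of phrase patterns. The sketch below is purely an assumption about what such a rule might look like: LinkedIn has not published its rules, and the patterns and the `is_engagement_bait` function are hypothetical.

```python
import re

# Hypothetical phrase patterns. LinkedIn has not published its actual rules.
ENGAGEMENT_BAIT_PATTERNS = [
    r"\b(leave|drop|write)\s+(a|your)\s+comment\b",
    r"\bcomment\s+(below\s+)?(if|to)\b",
    r"\blike\s+(this\s+post\s+)?if\b",
    r"\b(like|react)\s+(and|&)\s+(comment|share)\b",
]

_COMPILED = [re.compile(p, re.IGNORECASE) for p in ENGAGEMENT_BAIT_PATTERNS]


def is_engagement_bait(post_text):
    """Return True if the post matches at least one bait pattern."""
    return any(pattern.search(post_text) for pattern in _COMPILED)


examples = [
    "Leave a comment if you want to receive my free guide.",
    "We published our quarterly results today.",
    # A legitimate request that the rule still flags (false positive).
    "Please leave a comment on the draft policy before Friday.",
]

for text in examples:
    print(is_engagement_bait(text), "-", text)
```

The third example shows the weakness of this approach: a rule based on wording alone cannot tell a legitimate request for comments from engagement bait, which is why a careless choice of words can cost a post its visibility.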

Value-added content to increase interaction and time spent

All the changes announced by LinkedIn give a little control back to users so that they can improve their news feed. The causes of dissatisfaction that have recurred over the last few months can thus potentially be mitigated. However, the real challenge for LinkedIn will be to collect enough explicit feedback to make use of it.

LinkedIn promises to use its recommendation algorithm to personalize each news feed. Therefore, it is not possible to take the feedback of a limited number of people and extrapolate it to the rest of the population. Which population, by the way? Based on which criteria should homogeneous groups of users be formed?

We understand that LinkedIn’s goal is to encourage interaction (in the form of comments). This goal is quite commendable: exchanges are healthy and desirable. The benefit to the platform is obvious. The more network members interact, the more time they spend on LinkedIn (and the less on Meta, TikTok, etc.).

Conclusion: What should you take away from these changes to the LinkedIn algorithm?

The new changes to the LinkedIn algorithm aim to improve user satisfaction. In particular, they should identify certain deviant behaviors and penalize them. To spot them, LinkedIn gives more control back to the user in the form of “filters” to customize the news feed. Using these filters will send clear signals to the algorithm. Two challenges await LinkedIn:

- getting users to use these filters

- avoiding the filter bubble that comes from narrowing the scope of recommendations in the news feed