![ETL: comparison, selection criteria, advice [guide 2022]](/blog/app/uploads/ETL-extract-transform-load.jpg)

ETL: Extract – Transform – Load. These 3 words alone summarize the functionalities that software solutions belonging to this family must fulfil. THEREFORE, an ETL tool is a software that simplifies data preparation, local or on the cloud. The latter focuses on realizing 3 types of operations on data: (1) extracting them from files of different natures; (2) transforming and enriching them to prepare them for exploitation; (3) reloading them into another system so that they can be valued.

The graphic interface of ETL solutions simplifies these operations. These solutions are based on “boxes” that can be assembled to achieve the desired result. It is, therefore, a “No Code” solution that can be put in (almost) all hands.

In this article, I explain everything you need to know about ETL and give you my personal opinion to help you in your choice. This article is not commercial (I don’t sell ETL) but only presents an objective point of view.

Summary

- Origin of ETLs

- ETLs on the market

- Functionality 1: extraction

- Functionality 2: transformation

- Functionality 3: loading

- Additional functionalities of ETL+ tools

- Which ETL tool to choose?

ETL: What is their origin

The history of ETLs began in the 1980s. At that time, specific software solutions appeared to manage flows between “simple” databases. In the 90s, a shift was made to adapt them to data-warehouses (DWH).

In the 2000s, the market became more structured, and ETLs were divided into two categories:

- ELTs: some ETLs become ELTs and focus solely on the “E” and “L” (Extract and Load) tasks, leaving the “T” to the database engine. Examples: Talend, Matillion, etc.

- The complete ETLs: more “T” functionalities were added: data cleaning, slightly more complex joins. Some of these tools still exist in 2022. For example, IBM data stage, Ab Initio.

Around 2010, we see an evolution of ETLs to manage a situation where there is no database engine “behind.” From then on, ETLs became a little outdated. This is the era of the “data lake.” The data lake is a new concept optimized for business/data analysts and data scientists with more advanced data needs

Thanks to the data lake, ‘full ETLs’ become what I call ETL+. New functionalities are added to them to allow them to do more complex things: text mining, data mining (machine learning), AI, big data, etc. In this category, we find the ETL tools that are, in my opinion, the best of the bunch: Anatella and Alteryx.

ETLs on the market

There are far too many ETLs on the market to mention them all. I will mention 3 that I use daily in the following few paragraphs: Anatella, Alteryx, and Tableau. I could have added Talend, probably the best-known solution on the market, but I don’t use it much, and I’m not a big fan of it (primarily because of the performance problems I mentioned here).

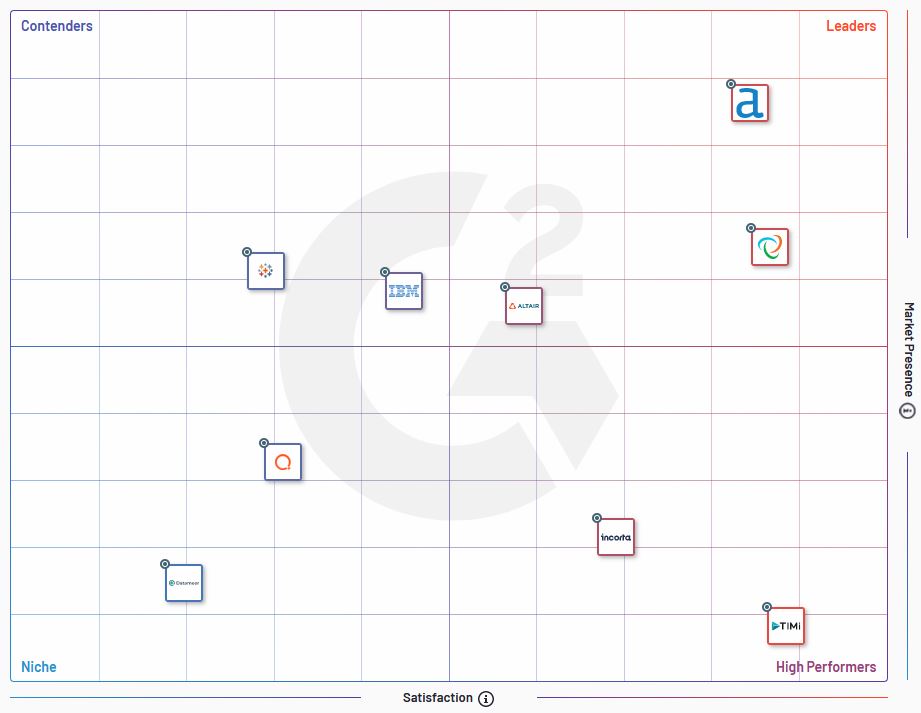

Let’s also mention that companies like G2 publish benchmarks of ETL solutions on the market. Like the one below, Positioning maps are handy to assess the different solutions on the market.

Functionality n°1: Extract

The first functionality of ETLs is to extract data from where it is located. The term “extract” is perhaps reductive here because it is about the ability to open a file and make the data it contains available to be used in subsequent processes.

The emergence of SaaS solutions and the democratization of cloud storage have made data extraction a challenge. There have never been so many different data sources and formats. Some proprietary formats are so specialized that a handful of ETLs only proposes them. What’s also fun is the ability to read “competing” files. Anatella, for example, has a “box” that can read Alteryx files.

Let’s not even talk about unstructured files (pdf, word), which are a real challenge and require transformations to be exploited (see next paragraph).

| ETL | Number of “boxes” to input data management | Remarks |

| Alteryx 2020.1.5.25447 | 4 | |

| Tableau Prep | 57 | The list of formats supported by Tableau Prep is really impressive. |

| Anatella 2.38 | 37 | Anatella also handles unstructured input formats, which can sometimes be very useful. |

Functionality n°2: Transform

Even if you have the best solution in the world to extract data, if you can’t do what you want with it, it’s useless. In this respect, some ETLs are clearly distinguished by the number of functions (the famous “boxes”) available. In my opinion, the “Transform” features are the ones that add the most value to an ETL.

I overview the number of boxes available to “transform” the data in the table below. I have counted the “boxes” whose functionalities fall into one of the following categories: cleaning, join, data formatting.

| ETL | Number of transformations available | Remarks |

| Alteryx 2020.1.5.25447 | 33 | The counted boxes belong to the categories “preparation,” “join,” “transform” of the main menu. For some of the boxes in the “preparation” menu, it is debatable whether they are “transformative” or not, but to keep it simple, I have taken them all into account. |

| Tableau Prep | 5 | Tableau Prep is very basic in terms of transformations. It seems to be a data preparation tool whose sole purpose is to serve visualizations using Tableau. If calculations have to be made and variables transformed, we would instead use the calculation fields in Tableau. It is, therefore, no longer a question of No Code. |

| Anatella 2.38 | 50 | Without a doubt, the richest solution in terms of the range of functionalities for transformations. |

Certain transformation features can make the difference between 2 ETLs

As far as I’m concerned, the difference between 2 ETLs is in the data transformation capabilities. The programmers will tell me that this is not important, but I don’t think so. An ETL is a Low Code (or No Code) tool and is therefore meant to simplify the work of non-technical staff. The number of features available natively is consequently an essential criterion in my eyes.

For example, I invite you to read this article dedicated to fuzzy matching. Depending on the tool you will use, fuzzy matching will be more or less complicated to set up. However, those who have already reconciled databases know how time-consuming quality problems can be. A native fuzzy matching function (especially when different algorithms are available) is a real plus.

Functionality n°3: Load

Of the 3 letters in “ETL,” the “L” is the one that seems to me to present the least criticality. The most important thing for ETL is to have sufficient export possibilities to standard exchange formats. Indeed, data preparation with ETL tools is often only the starting point of other processes (predictive modeling, data mining, data visualization) performed in specialized solutions. These solutions always have input connectors.

When the data preparation/transformation process runs continuously, the situation is slightly different. It can be interesting to have export possibilities to owner formats in this case. For example, Anatella proposes an export in the proprietary format of Tableau (.hyper), which saves time when reading the data. This avoids problems when importing the data into a third-party solution.

| ETL | Number of available output formats | Remarks |

| Alteryx 2020.1.5.25447 | 5 | |

| Tableau Prep | 3 | The output formats are minimal. In fact, Tableau Prep is designed as a tool to prepare data for visualizations using Tableau. |

| Anatella 2.38 | 27 | Again, many output formats are available, even to very exotic or little-used designs. If one of them is important to you, it could be a strong argument in your decision. |

Additional features

As explained in the “History” section, ETLs have evolved to include certain functionalities that went beyond the specific framework of the Extract – Transform – Load diagram. This is the case for analysis or visualization functionalities. The “ETL+” that proposes them deserves to be considered.

Indeed, far from being gadgets, these additional functions proposed within the ETL tool are boosters. They allow you to short-circuit the analysis process by anticipating specific steps in the data preparation process.

You have to define your needs beforehand to appreciate the value of such features. Some of them may indeed be anecdotal and will not be useful to you. So be careful not to fall into the marketing trap and buy components that are of no interest.

As an example, here are some of the additional features I appreciate the most in the Anatella solution:

- NLP: this feature was initially developed for me (as part of my research work on virality on LinkedIn)

- Language detection: beneficial when you work on unstructured data

- Visualizations with R: Anatella embeds R code that allows making simple visualizations quickly. These visualizations can be used to have a first idea of the data and realize quality controls. I use them to check that no data has been lost in the data preparation process.

Which ETL tool to choose?

So, you need to choose the ETL that best suits your specific needs. This is obvious. Each ETL solution has its specificities, and the comparisons that I realized above are only one key of reading among others.

Beyond the range of functionalities, you should also consider the speed of data preparation processes. I realized a benchmark of 4 tools here, and the differences in processing time were considerable.

In the end, I think that there are 2 essential objective criteria to take into account:

- the functionalities

- speed

In addition, there are more subjective aspects, such as the publisher’s product roadmap and the latter’s customer orientation. From this point of view, my preference goes clearly to Anatella. The company behind it is very responsive and does not hesitate to develop specific solutions for your needs. I doubt that companies like Talend or Alteryx do the same.

![Illustration of our post "Free Generative AI Detectors: Which Ones to Choose? [Complete Test 2025]"](/blog/app/uploads/sherlock-holmes-120x90.webp)

![Illustration of our post "LinkedIn remains under-used by marketing managers [Research]"](/blog/app/uploads/linkedin-logo-120x90.jpg)