Algorithms have taken over. They make more and more decisions, and those decisions are increasingly complex and affect every aspect of our lives. But algorithms are not perfect: they make mistakes too. So a question arises: do we forgive algorithms more easily than human beings for the same mistake? Research from 2021 sheds new light on this question and provides essential insights into the ideal characteristics of algorithms and chatbots.

If you only have 30 seconds

Research published in 2021 shows that we are more forgiving of mistakes made by algorithms than of those made by humans.

By applying the “theory of mind perception,” the authors of the research also show that how “human” the algorithm appears affects our judgment. The more users perceive the algorithm as “human,” the less they forgive its mistakes.

From a branding perspective, algorithmic mistakes generally cause less damage to the brand than human ones.

These results should encourage companies not to “humanize” their algorithms. This recommendation is also made in a recent opinion issued in France by the ethics committee on the challenges posed by chatbots.

2 examples of algorithmic errors

Algorithms make mistakes by nature. Since predictive algorithms rely on historical data to anticipate the future, any event that is absent from that history will inevitably cause a prediction error.
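To make this concrete, here is a minimal Python sketch of a forecast built only on past data. Everything in it is an assumption made for illustration: the order counts are invented, and `forecast_next` is a hypothetical helper that simply averages the history. It is not the method of any algorithm discussed in this article.

```python
from statistics import mean

# Hypothetical daily order counts; the numbers are invented for illustration.
history = [100, 104, 98, 102, 101, 99, 103]


def forecast_next(past):
    """Naive forecast: the average of everything observed so far."""
    return mean(past)


prediction = forecast_next(history)

# Suppose the next day brings an event with no precedent in the history,
# such as a one-off promotion (again, an invented value).
actual = 180

# The gap between what happened and what the history suggested.
error = actual - prediction
print(f"predicted {prediction:.0f}, observed {actual}, error {error:.0f}")
```

Because nothing in the history resembles the new situation, the forecast stays close to the past average and misses the jump entirely. Real predictive models are far more sophisticated, but they share the same dependence on what the data has already shown them.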

The vast majority of algorithmic errors go unnoticed. However, some make headlines because of the ethical or financial issues they raise.

Google’s search results

Google’s algorithm has been in the news on many occasions because of results that are, to say the least, curious. In particular, it was accused of racism after Kabir Ali discovered that the images returned for the search “three black teenagers” differed significantly from those displayed for “three white teenagers.”

The Apple credit card

The credit card launched by Apple in partnership with Goldman Sachs was accused of applying approval criteria that discriminated against women. Although an investigation by the authorities later dismissed the accusations, the damage was done: the algorithm had been accused of sexism.

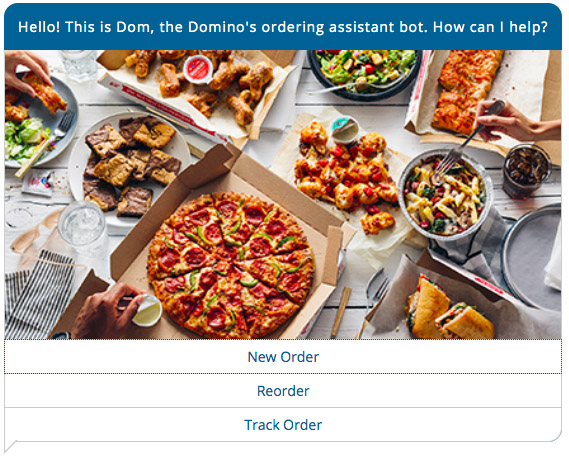

Credits: a screenshot of the Domino’s Pizza website

Why we forgive algorithms more easily than humans

Through a series of scenario-based experiments, the research shows that a human error is generally judged to be more serious than the same error made by an algorithm. Because the algorithm is not human, we let it off the hook more easily. In terms of branding, a brand will suffer less from an algorithmic error than from a human error.

Giving human characteristics to a machine is called anthropomorphism. The research shows that giving an algorithm human characteristics blurs the boundary between human and machine and alters our judgment. The more human the algorithm seems, the more responsibility we attribute to it when it makes an error. These characteristics can take different forms:

- a first name

- a surname

- a voice

- an image

This effect is even stronger when the algorithm is a machine learning algorithm.

Conclusion

Algorithm designers have a vested interest in not giving their creations human characteristics. If the algorithm makes an error, the company’s reputation will suffer less than it would if the algorithm had human characteristics.