In this comprehensive guide, I have synthesized the results of about fifty academic sources on the effect of reminders on online survey participation. You won’t find a more complete guide on the web.

An online survey without follow-up reminders averages a response rate of 25–30%. Managing reminders properly is therefore essential, especially when conducting a customer satisfaction survey. In this guide, I have summarized the results of the major academic studies on reminders in web surveys: when to send them, how many to schedule, how to write them, and what effects to expect on data quality. If you still have unanswered questions, I am available to answer them. Also consider reading my analysis of the factors influencing participation in online surveys to get a complete overview of the topic.

Key takeaways

- Without reminders, an email survey achieves on average 25–30% response rates; reminders can double this rate.

- Beyond 2 to 3 reminders, gains become negligible and the risk of being classified as spam increases.

- Varying the wording from one reminder to another increases the odds of a response by 36% compared to repeating the same message.

- Reminders do not degrade response quality and do not change the socio-demographic structure of the sample.

- In longitudinal panels, too many reminders in a given wave may reduce participation in subsequent waves.

Why reminders are essential in a survey campaign

Among all the levers available to improve participation in an online survey, the number of contacts with potential respondents is the best-documented factor. The meta-analysis by Cook, Heath & Thompson (2000), focusing exclusively on web surveys, ranks this factor first out of 15 tested variables.

The impact of reminders on participation rates

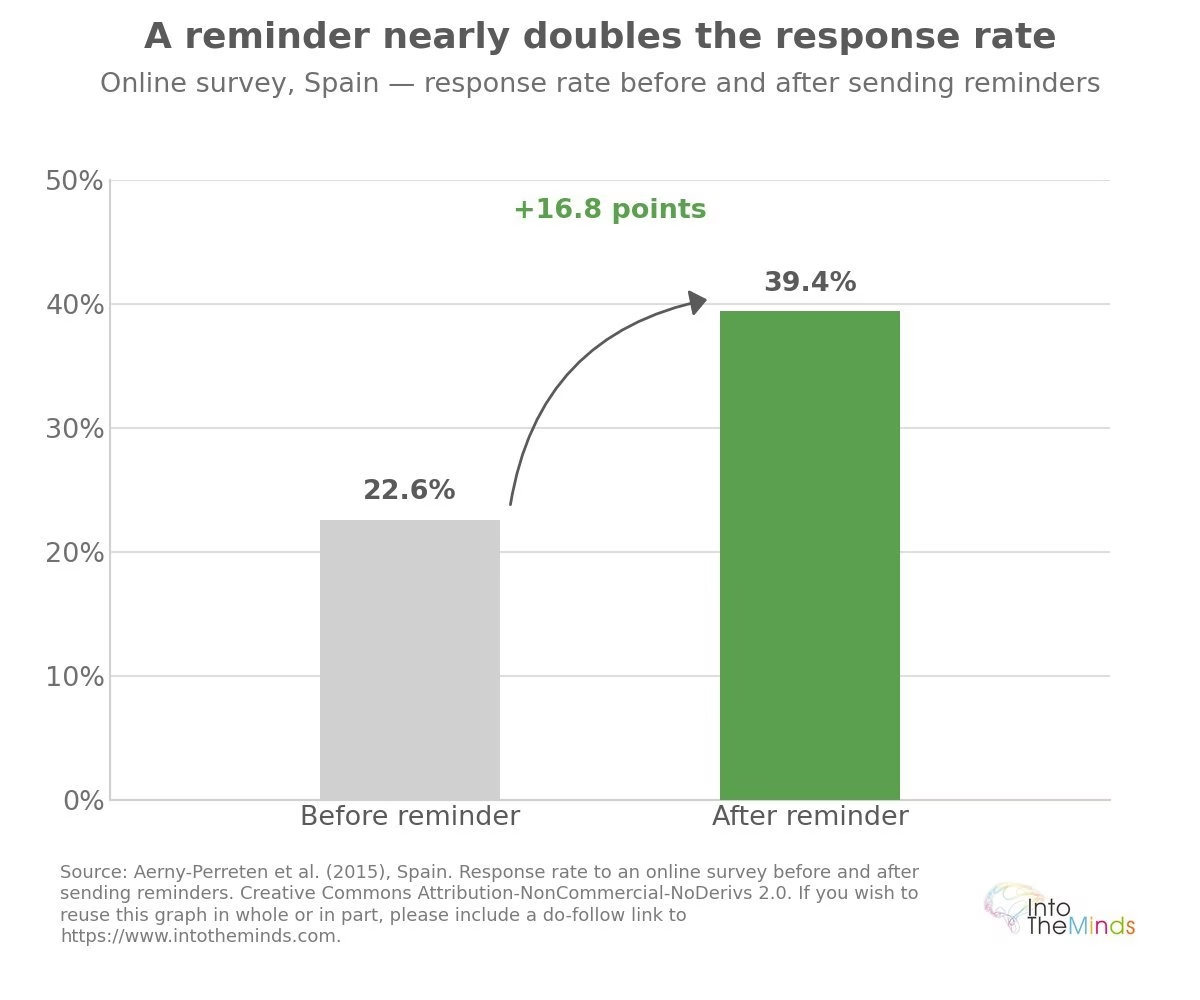

The figures are consistent across studies. Sheehan & Hoy (1999) estimate a typical 25% increase in participation due to additional contacts. Aerny-Perreten et al. (2015) report an increase from 22.6% to 39.4% after sending reminders in their protocol. In an experimental sample of more than 24,000 people, Sauermann & Roach (2013) confirm that each of three successive reminders produces a significant increase in response rate.

The table below summarizes the main available data on the effect of reminders across studies.

| Study | Context | Response rate without reminders | Response rate with reminders | Gain |
|---|---|---|---|---|
| Kittleson (1997) | Email surveys | 25–30% | ~50–60% | ×2 |
| Aerny-Perreten et al. (2015) | Web survey, Spain | 22.6% | 39.4% | +16.8 pts |
| Sheehan & Hoy (1999) | Online surveys | N/A | N/A | ~+25% |
| Blumenberg et al. (2019), Brazil | Longitudinal panel, 5 waves | 31.2% (5th wave) | 70.0% (first 2 waves) | Variable |
| Esomar Access Panel (France, 2003) | Online panel, summer 2003 | 57% (vs 65% usual) | +21% respond after reminder | Selective effect |
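The "Gain" column mixes two units: percentage points (an absolute difference) and multipliers or relative percentages. As a quick check, the Aerny-Perreten et al. (2015) figures can be expressed both ways. This is only a minimal sketch, and the function name `reminder_gain` is illustrative, not taken from any study.

```python
def reminder_gain(rate_without: float, rate_with: float) -> tuple[float, float]:
    """Return (gain in percentage points, relative gain in %)."""
    points = rate_with - rate_without
    relative = points / rate_without * 100
    return points, relative


# Response rates reported by Aerny-Perreten et al. (2015), in %
points, relative = reminder_gain(22.6, 39.4)
print(f"+{points:.1f} pts, +{relative:.0f}% relative")
```

The same result reads as +16.8 points or as a relative increase of roughly 74%, which is why the unit should always be stated when comparing studies.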

Risks of a no-reminder strategy

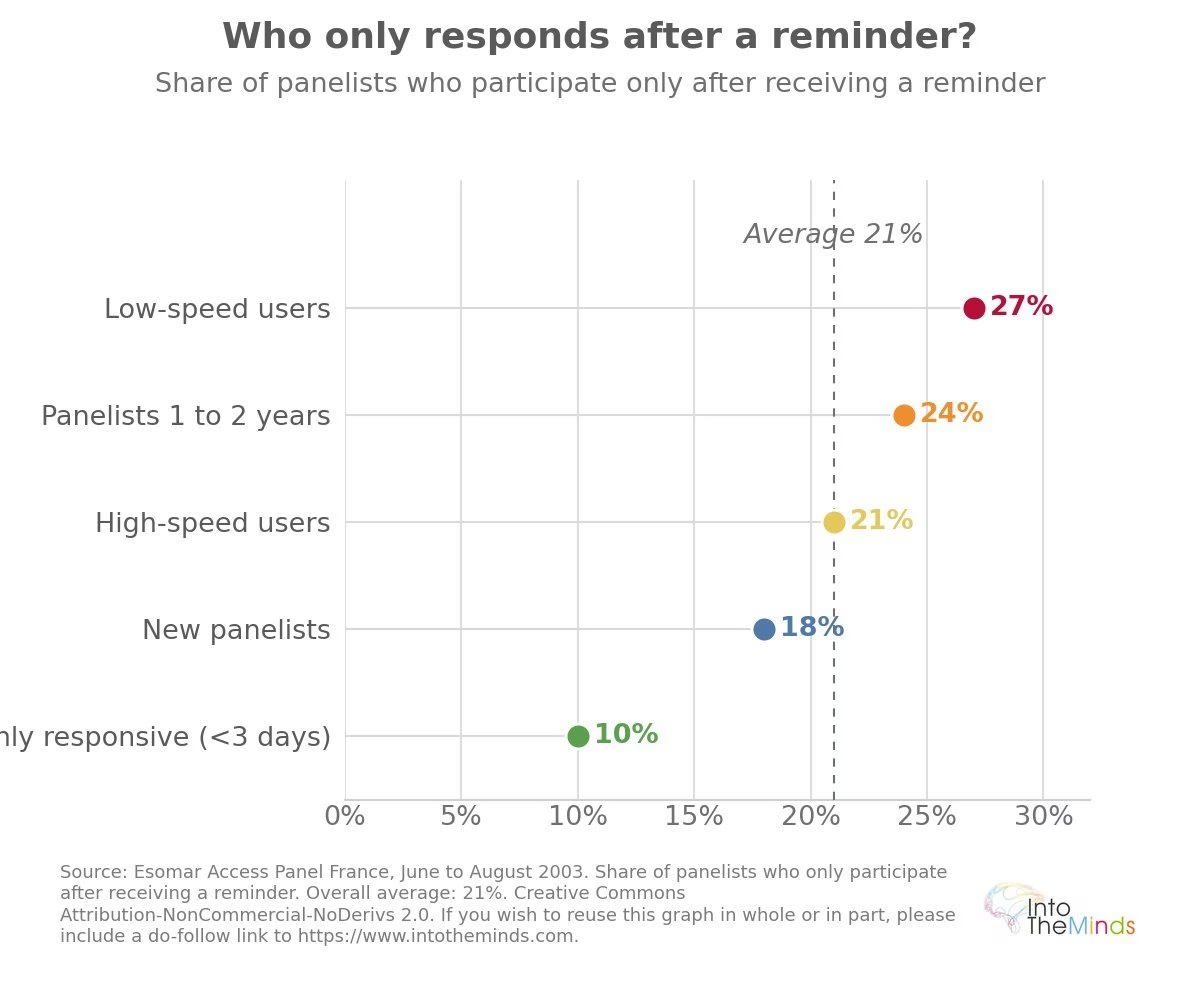

Not sending reminders means accepting the loss of a significant share of respondents who would have participated after a simple follow-up email. Data from the French Esomar Access Panel (summer 2003) show that 21% of panel participants respond only after being reminded. Among dial-up users, this proportion rises to 27%. In other words, the absence of reminders does not only reduce response rates: it introduces a behavioral bias into the sample by under-representing less responsive profiles. This study is admittedly old (2003), and the difference between high-speed and dial-up connections is no longer relevant today.

When should you send reminders?

The question of optimal timing for reminders is surrounded by misconceptions. Available scientific data helps clarify several of them.

The ideal timing for a first reminder

Sauermann & Roach (2013) find no significant difference in response rates depending on whether the first reminder is sent 7 or 21 days after the initial invitation. However, Dillman (2000) recommends not waiting too long: send a reminder as soon as the flow of spontaneous responses slows down, typically about one week in online surveys. This short delay is justified by the nature of the channel: the average response time for a web survey is 5.59 days, compared to 12.21 days for postal surveys (Ilieva, Baron & Healey, 2002).

Regarding precise timing (day of the week, time of day), the results are clear: sending time has no measurable effect on the final response rate. However, it does affect response speed: an evening send generates a median response delay of around 12 hours, compared to 3 to 4 hours at other times of the day. Lindgren et al. (2018), based on two experiments conducted on a Swedish panel totaling more than 47,000 participants, confirm that timing effects fade in less than a week.

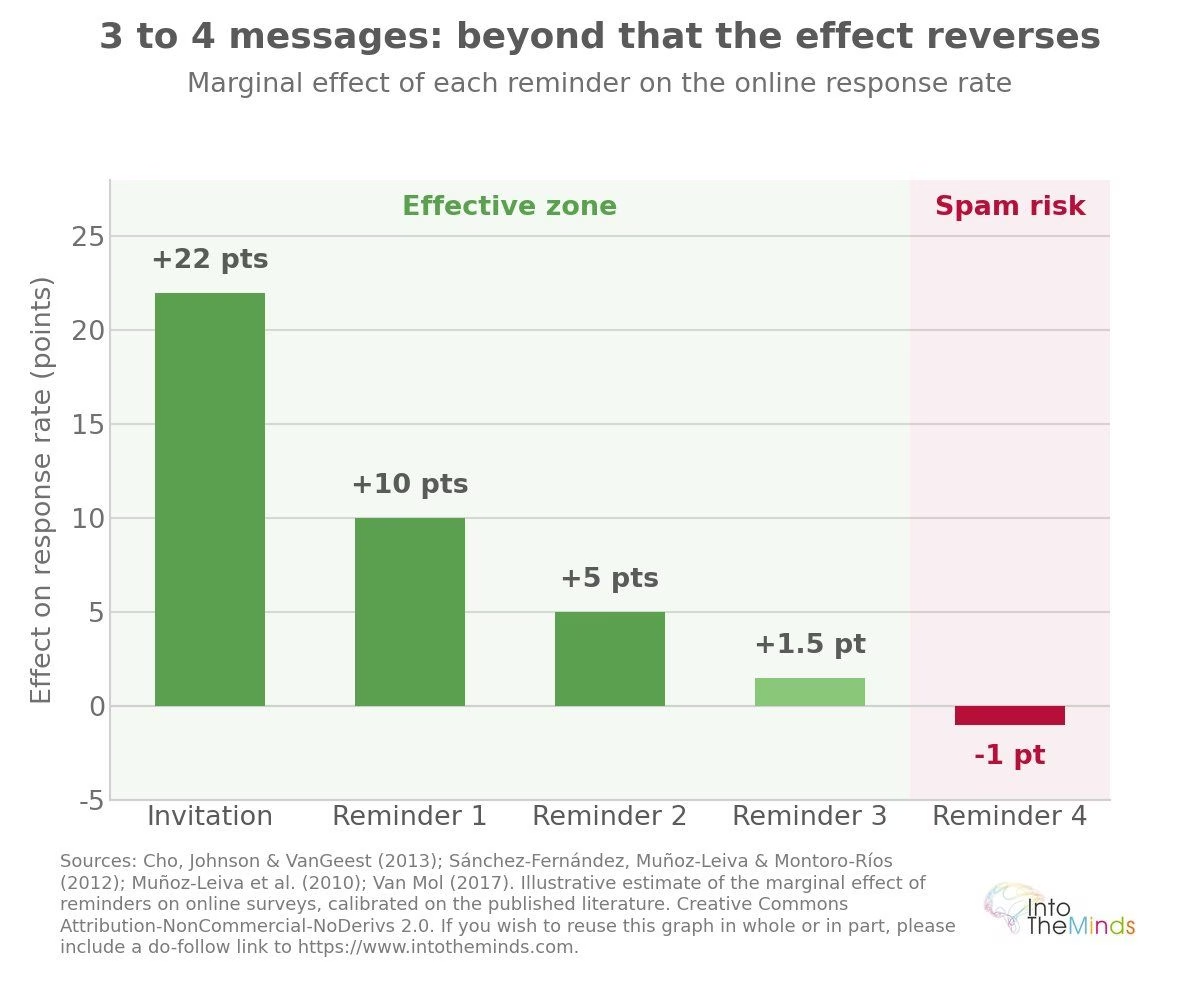

How many reminders should you actually send?

The literature converges on a ceiling of 3 to 4 total messages, including the initial invitation and final notification (Muñoz-Leiva et al., 2010; Van Mol, 2017). Here is what studies indicate depending on the number of reminders:

- 0 reminders: response rate capped at 25–30% for a standard email survey

- 1 reminder: near-universal gain; worthwhile in almost all contexts

- 2 reminders: maximized gains; Sánchez-Fernández et al. (2012) observe no significant improvement beyond this point on a sample of 4,512 internet users

- 3 or more reminders: Cho, Johnson & VanGeest (2013) find a higher response rate with two reminders than with three; the risk of being flagged as spam increases significantly

In our case, we apply a maximum of two reminders in our online studies. This rule, which I imposed on project managers, applies both to panel respondents and to clients in customer-based studies (e.g., satisfaction surveys).

Spacing reminders: best practices

The study by Blumenberg et al. (2019), conducted on 1,277 participants followed across five questionnaires, provides a clear result: a reminder frequency of every 15 days produces a higher response rate than a 30-day frequency, with no signs of perceived overload among participants. For one-off surveys, a reminder 7 to 10 days after the initial invitation remains the most consistent practice with available evidence.

How to set up effective automated reminders

Setting up automated reminders in a survey is not limited to scheduling a send date. Several parameters directly influence their effectiveness.

Steps to implement automated reminders

- Define a data collection deadline far enough ahead to allow at least one reminder

- Send the initial invitation via personalized email so non-respondents can be identified

- Schedule the first reminder after the decline of spontaneous responses (around 7 days)

- Plan a second reminder if the target response rate is not reached, with different wording from the first

- Do not exceed 3 to 4 total messages, including the initial invitation
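The steps above can be sketched as a small scheduling helper. This is an illustration under stated assumptions, not the API of any survey tool: the function name `plan_reminders`, the 7-day gap between reminders, and the example dates are all hypothetical. Only the first reminder at about 7 days and the cap of two reminders come from the text.

```python
from datetime import date, timedelta


def plan_reminders(
    invitation: date,
    deadline: date,
    first_delay_days: int = 7,  # first reminder about a week after the invitation
    spacing_days: int = 7,      # assumed gap between successive reminders
    max_reminders: int = 2,     # invitation + 2 reminders = 3 messages in total
) -> list[date]:
    """Return reminder dates that fall strictly before the collection deadline."""
    reminders = []
    send_on = invitation + timedelta(days=first_delay_days)
    while len(reminders) < max_reminders and send_on < deadline:
        reminders.append(send_on)
        send_on += timedelta(days=spacing_days)
    return reminders


# Hypothetical campaign: invitation on 2 March 2026, collection closes on 20 March 2026
schedule = plan_reminders(date(2026, 3, 2), date(2026, 3, 20))
for number, send_on in enumerate(schedule, start=1):
    print(f"Reminder {number}: {send_on.isoformat()}")
```

If the deadline is too close to leave room for a reminder, the function returns an empty list, which is a signal to push the deadline back as suggested in the first step. The second reminder should only actually be sent if the target response rate has not been reached by then.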

Personalizing reminders based on respondent profiles

Data from the Esomar Access Panel show that reminder effects are not uniform across profiles. Panel members with 1 to 2 years of tenure respond after a reminder in 24% of cases, compared to 18% for members with less than one year. Highly responsive panelists (typically replying in less than 3 days) respond in 90% of cases without needing a reminder.

These gaps suggest that behavioral segmentation can optimize reminder targeting and avoid contacting respondents who would have replied anyway. In 2026, the use of generative AI enables an unprecedented level of personalization at relatively low cost.
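As a rough illustration of behavioral segmentation, the sketch below keeps only non-respondents who do not usually reply quickly. Everything in it is hypothetical: the `Panelist` fields, the `reminder_targets` function, and the sample data are invented for the example, and the 3-day threshold simply mirrors the "highly responsive" definition quoted above.

```python
from dataclasses import dataclass


@dataclass
class Panelist:
    panelist_id: str
    has_responded: bool
    typical_delay_days: float  # hypothetical field: median reply delay in past studies


def reminder_targets(panel: list[Panelist], fast_threshold_days: float = 3.0) -> list[str]:
    """IDs of non-respondents whose usual reply delay is at or above the threshold."""
    return [
        p.panelist_id
        for p in panel
        if not p.has_responded and p.typical_delay_days >= fast_threshold_days
    ]


# Invented sample data for the illustration
panel = [
    Panelist("a", has_responded=False, typical_delay_days=1.5),  # usually fast: skipped
    Panelist("b", has_responded=False, typical_delay_days=6.0),  # slow: reminded
    Panelist("c", has_responded=True, typical_delay_days=8.0),   # already answered
]
targets = reminder_targets(panel)
print(targets)
```

A filter like this is probably best suited to an early reminder. A usually fast panelist who still has not replied by the time of a later reminder could reasonably be added back to the target list.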

Using smart reminders to optimize results

A counterintuitive finding deserves attention: changing the wording of successive reminders, without changing their informational content, increases the odds of a response by 36% according to Sauermann & Roach (2013). The hypothesis is that variation distinguishes the message from automated emails and signals genuine human effort. Van Mol (2017) confirms that improving reminder content is more effective than simply adding more identical messages.
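Because the 36% figure applies to odds and not to the response rate itself, it helps to convert it before setting expectations. The sketch below does that conversion. The 25% baseline is chosen only for illustration and is not a figure from Sauermann & Roach, and the function name is hypothetical.

```python
def rate_after_odds_increase(base_rate: float, odds_increase: float) -> float:
    """Apply a relative increase in odds to a baseline response rate (both as fractions)."""
    base_odds = base_rate / (1 - base_rate)
    new_odds = base_odds * (1 + odds_increase)
    return new_odds / (1 + new_odds)


# Illustrative baseline only: a 25% response rate before varying the wording
new_rate = rate_after_odds_increase(0.25, 0.36)
print(f"{new_rate:.1%}")
```

With this assumed baseline, the response rate moves from 25% to about 31%, not to the 34% that multiplying the rate by 1.36 would suggest.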

Common mistakes to avoid with reminders

Too many reminders: when does frequency become counterproductive?

Beyond a certain threshold, recipients start classifying messages as spam (Birnholtz et al., 2004; Porter & Whitcomb, 2003). This explains why web survey reminder effectiveness reaches saturation earlier than postal or telephone modes (Lozar Manfreda et al., 2008). For longitudinal panels, the issue is even more structural: Göritz & Crutzen (2012) warn that reminders increase participation in a given wave but may reduce willingness to respond in subsequent waves. Optimization therefore cannot be limited to immediate response rates.

Neglecting respondent segmentation

Sending the same reminder at the same time to all non-respondents is suboptimal. Available behavioral panel data (historical responsiveness, connection type, tenure) can identify profiles that genuinely benefit from reminders. Targeting only these groups reduces send volume while maintaining overall campaign effectiveness.

Ignoring communication preferences

Socio-demographic characteristics (age, gender, income, education level) are not correlated with whether respondents reply before or after reminders: reminders therefore do not change the final sample’s demographic structure. However, behavioral profiles (past responsiveness, usage patterns) are significantly correlated. Ignoring these variables means treating as identical respondents whose response behavior to reminders is structurally different.

FAQ: the questions you are asking

What is the ideal interval between two survey reminders?

A reminder frequency of 7 to 15 days is the range that most consistently emerges from available studies. Blumenberg et al. (2019) show that a 15-day interval produces better results than a 30-day interval. For one-off surveys, sending the first reminder about one week after the initial invitation is consistent with an average online response time of 5.59 days.

Do automatic reminders really increase responses?

Yes, in a documented and reproducible way. Studies converge on an average gain of around 25% in participation thanks to reminders, and some protocols even show a doubling of response rates compared to an invitation without follow-up. If you conduct opinion surveys or customer satisfaction surveys, sending at least one reminder is justified in almost all contexts.

How can I avoid my reminders ending up in spam?

Research identifies two main levers. First, limit the total number of messages to 3 or 4, including the initial invitation. Second, vary the wording of each reminder: different subject lines and message bodies signal a human, non-automated send, which reduces filtering risk. Porter & Whitcomb (2003) and Birnholtz et al. (2004) identify repetition without variation as the main spam trigger.

Can reminders be personalized based on respondent behavior?

Yes, and data suggest this is more effective than sending uniform reminders. A respondent’s historical responsiveness (reply delay in previous studies) is a strong predictor of their dependence on reminders. Highly responsive panelists do not need reminders: 90% respond spontaneously. Targeting reminders at less responsive profiles optimizes the balance between send volume and participation gain. For your B2B market research or B2C studies, this behavioral segmentation improves both quality and efficiency.

Do reminders affect response quality?

No, according to available data. Sánchez-Fernández et al. (2012) find no significant effect of reminder frequency on missing data (F = 0.36; p = 0.779). Sauermann & Roach (2013) reach the same conclusion: the increased response rate achieved through reminders does not lead to a deterioration in data quality. This removes one of the main objections to using reminders in protocols for brand awareness surveys or satisfaction studies.
