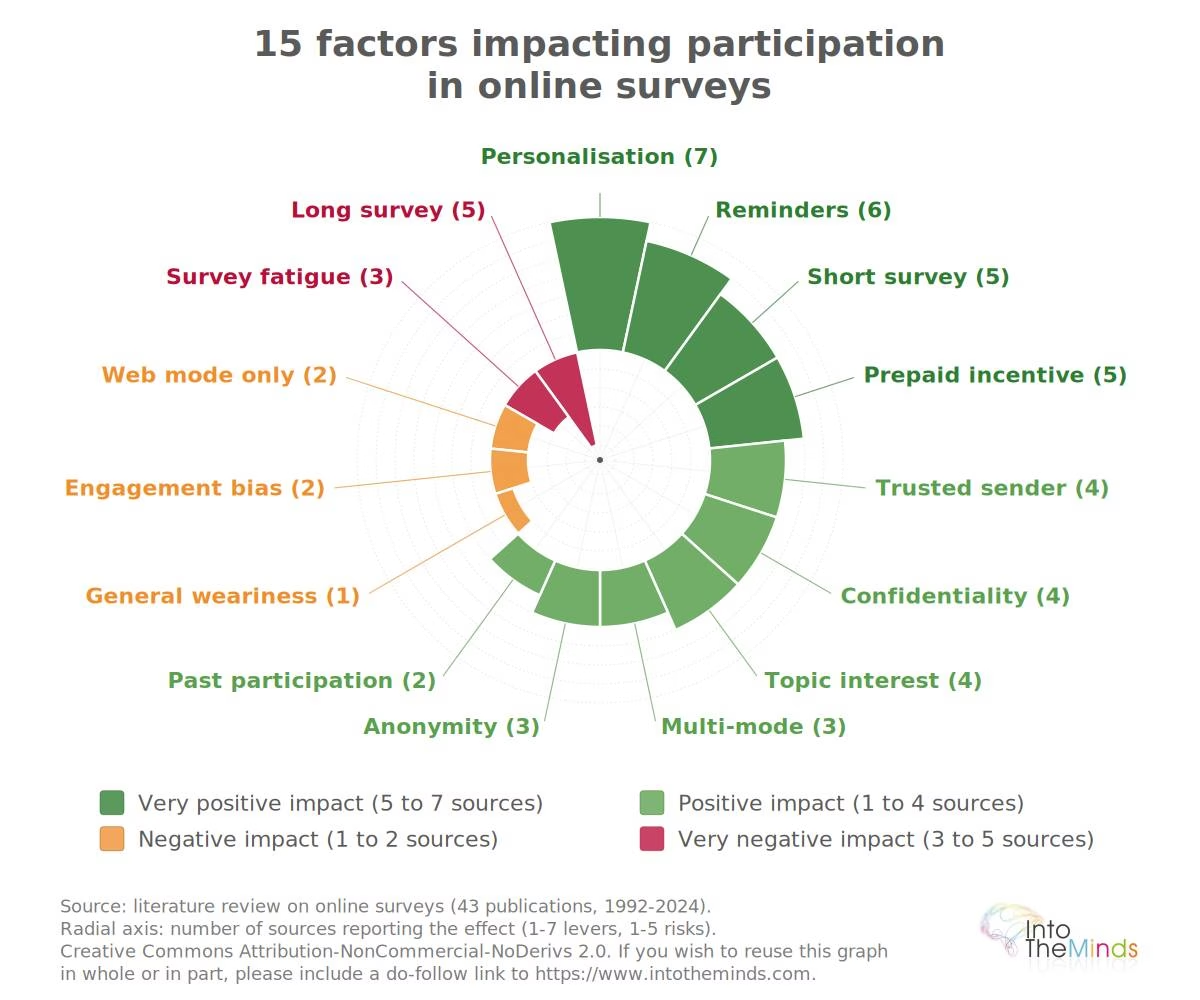

I analyzed 50 scientific studies and in this article, I explain the 15 factors that most influence participation in an online survey. 10 have a positive effect and 5 have a negative effect.

Our survey institute conducts several hundred surveys each year, and the factor that varies every time is the participation rate. I had already discussed in a previous article the differences between “professional” respondents and occasional respondents. In today’s article, I wanted to go one step further by carrying out a comprehensive analysis of the factors that influence participation in online surveys. To do this, I analyzed around fifty scientific studies covering a period of approximately 30 years. I hope the conclusions I share with you will be useful.


Key takeaways

- The announced duration matters more than the actual duration: moving from 30 to 10 minutes announced increases the percentage of people who start the survey from 63% to 75%

- Dropout rates are 31.8% for a 10-minute survey, 43.2% for 20 minutes, and 53.2% for 30 minutes

- Personal interest outweighs incentives: 88% of people respond if the topic concerns them, compared to 76% for a reward

- Well-crafted reminders can substantially increase your responses: from 23% to 39% with an appropriate follow-up strategy

- Trust in the sender makes all the difference: 89% accept a request from a known colleague versus 40% when it comes from a stranger

- Forcing responses drives people away: dropout increases from 10% to 35% with mandatory questions

Context: a growing challenge for digital surveys

Online surveys now dominate the quantitative research market, representing more than 25% of all surveys according to ESOMAR. This dominance does not translate into outstanding response rates. A recent meta-analysis of 1,071 questionnaires puts the average at 44.1%, with significant variation (Wu, Zhao & Fils-Aime, 2022).

However, my review of academic publications allows me to add a note of optimism. Across 1,000 surveys published in 17 major journals, response rates have increased over the years:

- 48% in 2005

- 53% in 2010

- 56% in 2015

- 68% in 2020

For details, see Holtom, Baruch, Aguinis & Ballinger (2022). This gradual improvement may indicate that those who conduct online surveys increasingly understand how to reduce dropout rates, which mechanically raises participation rates.

That is exactly the focus of this article. I review the 15 main factors that truly affect participation, classified into two categories: those that increase participation and those that hinder it. You will also find them in the chart above.

One clarification before you dive into the analysis: the 50 academic sources I relied on identify far more than 15 factors. To remain practical, I focused on the 15 most important ones.

Positive factors: 10 levers that improve participation

The factors below increase the likelihood that a person agrees to respond to an online survey. They are ranked by strength of evidence, meaning the number of converging sources reporting the effect.

Personalization of invitations

Personalizing invitations is a classic recommendation, but its actual impact remains nuanced. Including the recipient’s first name in the email helps slightly, but to be clear, the effect on response rate is limited.

Two interesting insights emerge from the academic studies I reviewed:

- An empty subject line in the invitation email performs better than including “Survey” (Porter & Whitcomb, 2005). Curiosity likely drives people to open the email.

- An email signed by a woman receives almost twice as many responses as one signed by a man.

But effective personalization goes beyond simply adding the recipient’s first name. You need to:

- adapt the message to the recipient’s context

- use their language

- refer to their specific concerns

A few years ago, this would have required significant effort. Today, however, with tools such as LLMs, this level of personalization is accessible to everyone.

Reminders and follow-ups

Follow-up strategies are a classic but effective way to improve participation. The marginal cost is almost zero, while the gains are substantial. There is therefore no reason to hesitate.

The empirical data are clear. A Spanish study saw its response rate increase from 23% to 39% thanks to follow-ups (Aerny-Perreten et al., 2015). Another study went from 6% after the first send to 31% after the fourth reminder.
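To read gains like these correctly, it helps to separate the gain in percentage points from the relative increase. The snippet below is a minimal sketch of that arithmetic; the function name `lift` is only illustrative.

```python
def lift(before_pct, after_pct):
    """Return (gain in percentage points, relative increase in %)."""
    points = after_pct - before_pct
    relative = points / before_pct * 100
    return points, round(relative, 1)

# Figures quoted in the text
print(lift(23, 39))  # (16, 69.6)
print(lift(6, 31))   # (25, 416.7)
```

In the first case, follow-ups add 16 points, which is a relative increase of about 70%.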

The effectiveness of reminders lies not in their number, but in their wording. Simply changing the text of each follow-up, without adding new information, increases the likelihood of response by 36% (Sauermann & Roach, 2013).

The delay between reminders (7, 14, or 21 days) has no measurable effect. However, beyond three or four reminders, you risk annoying more than convincing (Muñoz-Leiva et al., 2010). In our case, when conducting a survey on a client base, our policy is to send 2 reminders: the first after one week, the second after two weeks.

Short survey

Estimated duration is the first filter in the decision to participate. Experimental research shows a counterintuitive phenomenon: it is not the actual duration that matters, but the one you announce.

A comparative study reveals significant differences depending on the stated duration. When participants are told the survey takes “10 minutes,” 75% start the questionnaire. This proportion drops to 63% when the announcement says “30 minutes.” The surprising detail? The actual duration was around 40 minutes in both cases (Galesic & Bosnjak, 2009).

Other research confirms this trend. Surveys announced as 10 to 20 minutes obtain 30% response rates, compared to only 19% for those presented as 30 to 60 minutes (Marcus et al., 2007). A Greek study even identifies a critical threshold around 13 minutes: beyond this, participation drops significantly (Andreadis & Kartsounidou, 2020). When respondents are directly asked about their motivations, the top reason cited (average score 4 out of 5) remains: “it took less than half an hour” (Park, Park, Heo & Gustafson, 2019).

Prepaid incentive

Not all financial incentives are equal, and the most important nuance concerns the timing of the payment. A gift given upfront, without conditions, consistently works better than one promised upon completion. The psychological mechanism at play is reciprocity: a person who receives a small bill in their invitation envelope feels somewhat indebted, and this symbolic debt weighs more in the decision to respond than the abstract promise of a future reward (Singer & Ye, 2013).

Meta-analyses quantify the gap: a prepaid monetary incentive increases postal response rates by 19 percentage points on average, compared to only 8 points for a non-monetary gift of equivalent value. Online, the principle is harder to replicate since you cannot include cash in an email. A direct experiment with PayPal also shows that electronic prepayment does not create the same advantage as postal methods (Bosnjak & Tuten, 2003). A recent study further confirms that delayed payment destroys motivation: 86% of British students surveyed said they would not respond if the reward arrived four weeks later (Stoffel et al., 2023).

Dropout rates are 31.8% for a 10-minute survey, 43.2% for 20 minutes, and 53.2% for 30 minutes.

Forced responses and technical frictions

Some practices, although intuitive, prove counterproductive. The “mandatory response” option is one of them. The idea seems logical: forcing every answer avoids missing data. However, empirical reality shows the opposite.

The figures show that forcing responses generates 35% dropout, compared to only 9–10% when answers are optional. But it gets worse: if you include a mandatory open-ended question, 7.5% of respondents leave immediately (Décieux et al., 2015).

This mechanism appears whenever you introduce a “friction point” in your survey (for example: requiring a password, creating an account, verifying an email address, or asking for a city of residence). These elements cause participants to drop out (Lavidas et al., 2022).

Survey fatigue

The accumulated fatigue of over-surveyed respondents eventually reduces both response rates and data quality. In professional panels, where some participants receive multiple invitations per week, this phenomenon has long been documented.

At the individual level, “survey fatigue” manifests as:

- shorter open-ended responses

- increased straight-lining in grid questions

- more “don’t know” answers

- lower response rates

If you regularly survey your customer base (e.g., through satisfaction barometers), you should therefore space out requests.
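One of these symptoms, straight-lining, is easy to monitor from the raw data. Here is a minimal sketch, assuming each respondent's answers to a grid question are available as a list of ratings; the data structure and function names are hypothetical.

```python
def is_straight_lined(grid_answers):
    """True if every item of a grid question received the same answer."""
    return len(grid_answers) > 1 and len(set(grid_answers)) == 1

def straight_lining_rate(respondents):
    """Share of respondents who gave identical answers across the grid."""
    if not respondents:
        raise ValueError("no respondents provided")
    flagged = sum(1 for answers in respondents.values() if is_straight_lined(answers))
    return flagged / len(respondents)

# Hypothetical answers to a 5-item grid on a 1-5 scale
wave = {
    "a": [3, 3, 3, 3, 3],
    "b": [4, 2, 5, 3, 4],
    "c": [1, 1, 1, 1, 1],
    "d": [2, 3, 2, 4, 5],
}
print(straight_lining_rate(wave))  # 0.5
```

Tracking this rate from one wave to the next is one possible way to spot growing fatigue before response rates fall.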

Web-only mode

Relying solely on the web channel to collect responses reduces participation rates. Web surveys score on average 12 percentage points lower than strictly comparable postal, telephone, or face-to-face surveys (Daikeler, Bošnjak & Lozar Manfreda, 2020). This gap does not diminish over time, despite increasing internet penetration.

Web mode also has a specific drawback: ease of abandonment. Unlike phone or face-to-face surveys, closing a browser tab requires no effort. For surveys with a general invitation, dropout rates can reach 80%, with an average around 30% (Lozar Manfreda et al., 2008).

Engagement bias

Online questionnaires over-represent certain profiles: politically engaged individuals, highly educated respondents, and frequent internet users. Conversely, ethnic minorities, younger people, and less connected populations are under-represented. This self-selection affects the validity of results.

A recent study of 11,000 panelists recruited via Facebook and Google highlights the scale of the issue: a response rate of about 0.4%, strong over-representation of politically engaged profiles, and chronic under-representation of minorities and younger people (Hopkins & Gorton, 2024). We previously covered another study on the same topic, which showed that social media samples can have errors of up to 17%.

Representativeness therefore matters more than raw response rate. A high response rate does not guarantee a good sample, and a low response rate does not automatically imply bias, as long as respondent profiles remain aligned with the target population.
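A simple way to check this alignment is to compare the share of each group in the sample with its share in the target population. The sketch below uses invented age groups and figures for illustration only; `profile_gaps` is a hypothetical helper.

```python
def profile_gaps(sample_counts, population_pct):
    """Gap in percentage points between sample share and population share, per group."""
    total = sum(sample_counts.values())
    return {
        group: round(100 * sample_counts.get(group, 0) / total - population_pct[group], 1)
        for group in population_pct
    }

# Hypothetical sample (number of respondents) and population structure (in %)
sample = {"18-34": 120, "35-54": 200, "55+": 80}
population = {"18-34": 28, "35-54": 34, "55+": 38}

gaps = profile_gaps(sample, population)
print(gaps)  # {'18-34': 2.0, '35-54': 16.0, '55+': -18.0}
```

In this invented example, the 35-54 group is over-represented by 16 points and the 55+ group is under-represented by 18 points, whatever the overall response rate.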

Frequently asked questions

How do I calculate the optimal duration to announce for my online survey?

The announced duration should stay under the 13-minute threshold to maintain a high participation rate. At IntoTheMinds, we advise our clients to stay under 9 minutes (which corresponds to roughly 25 closed-ended questions).

If your questionnaire actually takes 20 minutes, it is better to announce “about 15 minutes” and optimize the interface to speed up completion. The key is to respect the commitment made: if you announce 10 minutes, ensure that 80% of respondents finish within that time.
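The 80% rule can be checked on pilot data before launch. Here is a minimal sketch, assuming you have the completion times of a pilot in minutes; the figures and function names are hypothetical.

```python
import math

def share_within(durations_min, announced_min):
    """Fraction of respondents who finished within the announced duration."""
    if not durations_min:
        raise ValueError("no completion times provided")
    on_time = sum(1 for d in durations_min if d <= announced_min)
    return on_time / len(durations_min)

def duration_to_announce(durations_min, target_pct=80):
    """Smallest whole number of minutes within which target_pct% of respondents finished."""
    if not durations_min:
        raise ValueError("no completion times provided")
    ordered = sorted(durations_min)
    # Nearest-rank percentile: position of the respondent at the target share
    rank = math.ceil(target_pct * len(ordered) / 100)
    return math.ceil(ordered[rank - 1])

# Hypothetical completion times (minutes) from a pilot with 10 respondents
pilot = [6.5, 7.0, 7.8, 8.2, 8.9, 9.1, 9.4, 9.8, 11.5, 14.0]
print(share_within(pilot, 10))      # 0.8
print(duration_to_announce(pilot))  # 10
```

Here, announcing “10 minutes” would be honest for 80% of the pilot respondents.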

Is it better to offer a reward or rely on interest in the topic?

Personal interest in the topic generates 88% participation compared to 76% for a financial incentive. First invest in precise audience targeting. Financial incentives work as compensation for less engaging topics, but they do not replace strong thematic relevance. For B2C market research, prioritize relevance for respondents.

How many reminders can I send?

A maximum of three to four reminders, each with varied wording. Changing the text without adding new information increases responses by 36%. The timing between reminders (7, 14, or 21 days) has no impact on results. Beyond four reminders, you risk annoying rather than convincing. For opinion surveys, this spaced reminder strategy is particularly effective.

How can I improve trust in my survey invitations?

The sender’s identity makes a major difference: 89% respond to a known colleague versus 40% to an unknown sender. Use personalized email addresses, sign with a name and role, and add a photo if possible. Promising confidentiality increases responses by 33%. Avoid requesting personal information in the invitation (address, phone number, identifiers).

What technical mistakes cause respondents to drop out?

Forcing responses increases dropout from 10% to 35%. Avoid mandatory questions, especially personal open fields. Every technical friction point (passwords, account creation, email verification) leads to participant loss. Prefer direct access via a unique link. For customer satisfaction surveys, simplicity is critical to maintain engagement.

How do I detect and prevent fake respondents in paid surveys?

Include trap questions, check IP addresses to detect duplicates, and analyze unusually fast completion times (less than 2 seconds per question). Look for suspicious clusters of submissions within the same hour or region. Use CAPTCHAs to block bots. Assume that a fraction of paid responses may be invalid and plan for a 10–15% oversampling.
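Several of these checks can be automated. The sketch below is one possible implementation, assuming each response is stored as a dictionary with hypothetical `id`, `ip`, `seconds`, and `passed_trap` fields; it only flags responses for manual review and does not replace CAPTCHAs or cluster analysis.

```python
from collections import Counter

MIN_SECONDS_PER_QUESTION = 2  # threshold quoted in the text

def flag_suspicious(responses, n_questions):
    """Return {respondent id: [reasons]} for responses worth a manual review."""
    ip_counts = Counter(r["ip"] for r in responses)
    flags = {}
    for r in responses:
        reasons = []
        if ip_counts[r["ip"]] > 1:
            reasons.append("duplicate_ip")
        if r["seconds"] / n_questions < MIN_SECONDS_PER_QUESTION:
            reasons.append("too_fast")
        if not r["passed_trap"]:
            reasons.append("failed_trap")
        if reasons:
            flags[r["id"]] = reasons
    return flags

def completes_to_collect(valid_needed, oversampling_pct=15):
    """Completes to collect when oversampling by oversampling_pct% (rounded up)."""
    return -(-valid_needed * (100 + oversampling_pct) // 100)

# Hypothetical responses to a 20-question survey
responses = [
    {"id": "r1", "ip": "203.0.113.5", "seconds": 300, "passed_trap": True},
    {"id": "r2", "ip": "203.0.113.9", "seconds": 30, "passed_trap": True},
    {"id": "r3", "ip": "203.0.113.9", "seconds": 280, "passed_trap": False},
]
print(flag_suspicious(responses, n_questions=20))
print(completes_to_collect(500))  # 575
```

A shared IP address is not proof of fraud (several respondents may share an office or household connection), so treat the flags as a shortlist rather than a verdict.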
