Everyone is worried about the rise of ChatGPT and the revolution it announces. Google has even called back its 2 founders to help counter the threat of conversational robots. Indeed, every use built around information search (and therefore Google’s business model) is likely to be turned upside down. However, classic search should not be buried yet. On the contrary, in some contexts, classic search engines have advantages that generative AI will never have. I examined 5 contexts and compared the performance of ChatGPT and Google. Google wins in 3 cases out of 5.

The biggest danger of ChatGPT and generative AI is handing your decision-making power over to them.

The main ideas to remember

- Generative AI provides a better user experience than a traditional search engine in the following cases:

  - answering a closed-ended question

  - carrying out comparisons between facts

  - helping with a simple decision (choosing between A and B)

- Traditional search engines offer more value in complex cases such as:

  - interpretation of data

  - complex decision making

  - fuzzy search (search for a solution not known in advance)

- A classic search engine offers an experience that enriches the user:

  - serendipity: the user can discover information that broadens their potential and understanding

  - the mix of textual and visual information allows the brain to integrate signals of different natures that help in the construction of thought

- The great danger of generative AI is delegating your decision-making power to it. In complex situations, ChatGPT and its competitors will direct you to a solution that is not necessarily the best one for you.

- The danger of generative AI is that it proposes only one answer. In doing so, it eliminates any possibility of “serendipity,” i.e., the chance discovery of additional information. Thinking and decision-making are thus impoverished.

Summary

- The nature of the queries determines the interest in generative AI

- Conclusion: The danger of ChatGPT is to delegate your decision power to it

The nature of the queries determines the interest in generative AI

To properly analyze the threat that the GPT-3 model represents for Google, we first need to step back and consider what an online search actually is. We use the internet to answer questions and find solutions to a wide variety of problems. The enthusiasm about the potential of ChatGPT needs to be assessed against this diversity of contexts.

| Query type | Examples | Generative AI (ChatGPT, …) | Classic search engine | Winner |
| --- | --- | --- | --- | --- |
| Simple (closed-ended) question | Where was X born? When did Y take place? | + immediate answer in the form of a sentence + only one sentence to read – no possible questioning of the information presented | + several documents proposed with the answer – potentially different answers for the same question | ChatGPT |
| Simple comparison | Which is the biggest mountain: X or Y? How do the 2 phones, X and Y, compare? | + immediate answer in natural language + formatting of a complex answer | – potentially, several web pages will have to be browsed to answer this question – no simple answer to the question | ChatGPT |
| Interpretation of data | What are the key success factors in this market? | + proposal of possible answers – superficial or even erroneous answers – lack of contextualization | + potentially correct answer if a recent web page exists on the subject + better view of the subject thanks to the consultation of several web pages – need to browse several pages to get a first idea of the subject | Google |
| Fuzzy search | How to reduce the management costs of my supply chain? How to improve customer satisfaction? | + proposal of answers – no guarantee of completeness | + acquisition of new knowledge and increase in competence thanks to the reading of several pages + better mastery of the subject – several pages on the same subject will have to be read and synthesized | Google |
| Complex decision making | Choosing a partner to manage your supply chain? Choosing a market research firm? | + quick first selection – potentially biased selection – often irrelevant results – no weak signals (website ergonomics, visuals, other information) | + the user keeps control over the selection of options for their choice + weak signals (quality of the website, visuals, etc.) are taken into account to form the decision + results are almost always relevant, even in localized contexts – several websites have to be browsed | Google |

Context 1: Find a precise answer to a closed-ended question

Who was Carlo Crivelli? What is remarketing? When did the battle of Marignano take place? These questions have in common that they require precise answers based on facts.

ChatGPT’s ability to propose concise natural language formulations will likely boost interest in smart speakers. Where Google will propose pages that are sure to contain the answer, ChatGPT will compose a summary for you. This is ideal for students who want to read one page at most and for those who don’t have time.

Winner: chatGPT

Context 2: Answer a comparative question

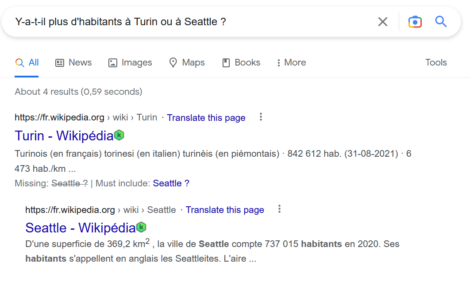

Are there more people in Turin or Seattle? Is the city of Phoenix further north than Marrakech? These questions may seem trivial, but Google has only one way to answer them: proposing a series of web pages to consult.

In the first case, Google proposes the extracts of 2 Wikipedia pages which allow us to conclude that Turin is more populated than Seattle.

ChatGPT answers the question directly so that the user doesn’t have to think about it anymore.

What is impressive is that the generative AI allows you to make a difficult comparison, a benchmark. This assumes, of course, that you know what to compare. Here is an example obtained with the “conversation” mode of the Bing search engine.

Winner: chatGPT (and generative AI in general)

Context 3: Interpreting facts

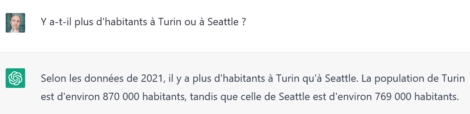

Human intelligence is currently the only kind that can assimilate scattered data and interpret it. Complex queries that involve interpreting data, rather than just comparing it, are much harder to answer. ChatGPT, or any generative AI, will provide a well-written and well-structured answer, but it will only sometimes be right. Let’s look at ChatGPT’s answer to my question about the success factors of a ready-to-wear brand.

None of the suggestions provided by ChatGPT corresponds to the reality of the French market. If we disregard the high-end/luxury segment, there are currently only 2 strategies that work in ready-to-wear:

- low prices

- ultrafast renewal of collections

This explains why the textile sector in France is being decimated. Poorly positioned in the mid-range, it cannot resist the power of fast fashion (Zara, H&M) and ultra-fast fashion.

On the Google side, this type of query returns a variety of pages. The first one is particularly relevant because it includes a PESTEL analysis that applies to the French context. The information is also much more detailed, and the peripheral elements enrich the user’s thinking and guide their further research.

I repeated this type of research in many contexts, and Google always offered the most added value.

Winner: Google

Contexts 4 and 5: Fuzzy search and complex buying decisions

An online search does not necessarily start with a specific query. When the situation is complex, each search helps build the next one. It is an iterative process.

The risk posed by generative AIs is that they ignore this “fuzzy” logic. They aim to immediately provide you with a “definitive” answer to a question. It is an intellectual shortcut that drags the construction of human thought down.

Fuzzy search is particularly applicable to complex buying decisions in B2C and B2B. Consider the following cases:

- you are looking for a new car

- you are looking for ways to improve your supply chain management

- you want to find more customers for your business

- you need to do market research (an example chosen totally at random)

In these cases, your research will probably take several hours, days, or even weeks. You do not know in advance what the solution will look like. You have to go through a learning phase to make the best choice. Paradoxically, searching for diverse and varied information allows you to enrich yourself intellectually, close some doors, and open others.

We build our choices based on information gleaned here and there and on weak signals sent to our brain by the website itself. Your brain scans a web page and integrates the images, the titles, and any videos. These visual elements are currently missing from ChatGPT’s answers, yet they are essential to the construction of the decision.

“If it’s difficult, it means that we learn something.” With chatGPT, everything is too easy.

Let’s take a concrete example. I have seen several examples of tourist itineraries generated by ChatGPT. Some people were already announcing the end of travel guides. I can only disagree completely. Of course, ChatGPT can perfectly interpret your question and propose a “solution” in the form of a travel itinerary (see the example below). But is it really what you want? Isn’t there more value in searching for yourself, getting lost in a travel guide while learning more about your destination country, rather than asking for a ready-made solution? I tell my son, “If it’s difficult, it means you are learning something.” With ChatGPT, everything is too easy.

And by the way, is the “solution” proposed by ChatGPT really a solution? ChatGPT’s answer to my request (see screenshot below) is unrealistic. Considering the distances in Iceland (I went there, see this post), this program is only feasible under certain conditions.

As you can see, finding a solution to a complex problem online is an iterative process. And this is where the illusion of ChatGPT and its danger lies.

Winner: Google

Conclusion: The danger of chatGPT is to delegate your decision power to it

ChatGPT gives us the illusion that it can deliver immediate answers to all our problems. While this is true in simple cases, I hope to have made you aware that solutions to the most complex problems are built iteratively. More interestingly, the very process of searching for information enriches us because:

- it forces us to expand our perspective on a situation and to consider a multitude of potential solutions

- it allows us to acquire new knowledge by actively seeking the best solution

Therefore, searching on Google seems to me to be much more beneficial than using ChatGPT. The search heuristic allows the user to grow intellectually. They learn new things, can consider solutions they “stumbled upon” by chance (this is serendipity), and make an informed decision. To use a metaphor, ChatGPT lets us look through the keyhole, whereas classic search engines let us step back and see the whole picture.

I criticize ChatGPT because it strips Internet users of their decision power. ChatGPT proposes an answer (at best incomplete, at worst false) to questions that are sometimes too complex. This shortcut impoverishes us by depriving us of our ability to make a reasoned decision.
